While this may simplify code management and reuse, it is constrained by the performance and scalability of today’s EDC systems, which were developed many years ago and designed for much smaller volumes of data. One approach to addressing these problems is to bring all the data into the EDC and implement both simple and complex checks within that platform. First, as non-EDC (laboratory, biomarker, imaging, patient-reported, etc.) data account for the majority of the information collected during a clinical trial, queries against those systems or vendors involve a different workflow from that used in the EDC (often relying on emails or telephone calls.) Second, whereas the EDC checks are typically implemented by the data managers who set up the electronic case report forms, the more complex checks and discrepancy logic are implemented by statistical programmers.

Managing this process is very inefficient and error prone. In both cases, the output of these checks is reviewed by data managers who delegate any suspect data to the appropriate parties for verification and correction. The latter usually require data to be combined from multiple sources and are implemented in SAS, R or Microsoft Excel, as they may require fairly complex logic. To aid in this process, the data are inspected through a number of programmed checks, which fall into two categories: (i) those implemented and managed within a particular source system (typically the EDC) and (ii) those implemented and managed outside of any individual source. That data undergo a significant amount of scrutiny, from ensuring that site personnel enter the information in a timely and complete manner, to supporting queries and other forms of communication to address concerns for accuracy and consistency, to facilitating multiple rounds of review, particularly for verification of correct transcription from the original source. Of central importance is the electronic data capture (EDC) system used to capture patient observations at investigational sites. That process involves many different groups, such as clinical investigators and site staff, patients, laboratory and imaging vendors, device and technology companies and more, entering data into a multitude ofdisparate systems. Data management plays a crucial role in this process, ensuring that the data collected is complete, accurate and delivered in a timely manner for analysis, submission and disclosure ( 1).

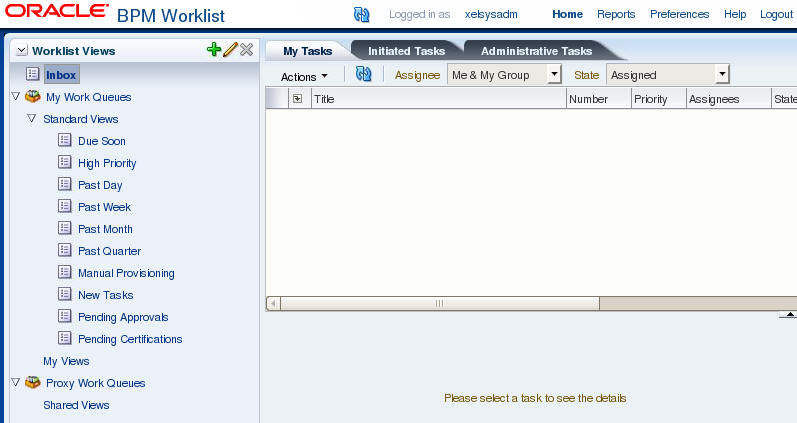

The goal of clinical trials is to demonstrate the efficacy, safety and comparative effectiveness of new investigational treatments or existing therapies that warrant further study. Our solution enables timely and integrated access to all study data regardless of source, facilitates the review of validation and discrepancy checks and the management of the resulting queries, tracks the status of page review, verification and locking activities, monitors subject data cleanliness and readiness for database lock and provides extensive configuration options to meet any study’s needs, automation for regular updates and fit-for-purpose user interfaces for global oversight and problem detection. Here, we introduce a new system for clinical data review that helps data managers identify missing, erroneous and inconsistent data and manage queries in a unified, system-agnostic and efficient way.

Traditionally, this data is captured, cleaned and reconciled through multiple disjointed systems and processes, which is resource intensive and error prone. Assembly of complete and error-free clinical trial data sets for statistical analysis and regulatory submission requires extensive effort and communication among investigational sites, central laboratories, pharmaceutical sponsors, contract research organizations and other entities.
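To make the second category of checks concrete, the following is a minimal sketch of a check that combines data from two sources. It is an illustration only, not the system described in this article: the record layouts, the field names (`subject`, `visit`, `visit_date`, `collected`) and the function `cross_source_check` are all hypothetical, and real studies would typically implement this kind of logic in SAS or R against much larger extracts.

```python
from datetime import date

# Hypothetical EDC extract: one row per subject visit.
edc_visits = [
    {"subject": "S001", "visit": "WEEK1", "visit_date": date(2021, 3, 1)},
    {"subject": "S002", "visit": "WEEK1", "visit_date": date(2021, 3, 2)},
    {"subject": "S003", "visit": "WEEK1", "visit_date": date(2021, 3, 3)},
]

# Hypothetical central-laboratory transfer: one row per sample.
lab_samples = [
    {"subject": "S001", "visit": "WEEK1", "collected": date(2021, 3, 1)},
    {"subject": "S002", "visit": "WEEK1", "collected": date(2021, 3, 5)},
]


def cross_source_check(visits, samples):
    """Return one discrepancy per visit whose lab sample is missing or misdated."""
    # Index the lab data by (subject, visit) so each EDC visit can be matched.
    by_key = {(s["subject"], s["visit"]): s for s in samples}
    discrepancies = []
    for v in visits:
        key = (v["subject"], v["visit"])
        sample = by_key.get(key)
        if sample is None:
            discrepancies.append((*key, "no lab sample received"))
        elif sample["collected"] != v["visit_date"]:
            discrepancies.append((*key, "collection date differs from visit date"))
    return discrepancies


issues = cross_source_check(edc_visits, lab_samples)
for subject, visit, reason in issues:
    print(f"{subject} {visit}: {reason}")
```

Each flagged row corresponds to an item a data manager would review and, where appropriate, route to the site or the laboratory vendor as a query.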
